Apple’s new patent application, published Thursday by the United States Patent and Trademark Office (USPTO), extends a Metaio patent that was assigned to Apple after it acquired the company in 2015. The filing details potential applications for augmented reality and illustrates a head-mounted display like the one Apple is rumored to be working on.

Titled “Method for representing points of interest in a view of a real environment on a mobile device and mobile device therefor”, the invention would enhance today’s two-dimensional maps by adding augmented reality points of interest to the mix.

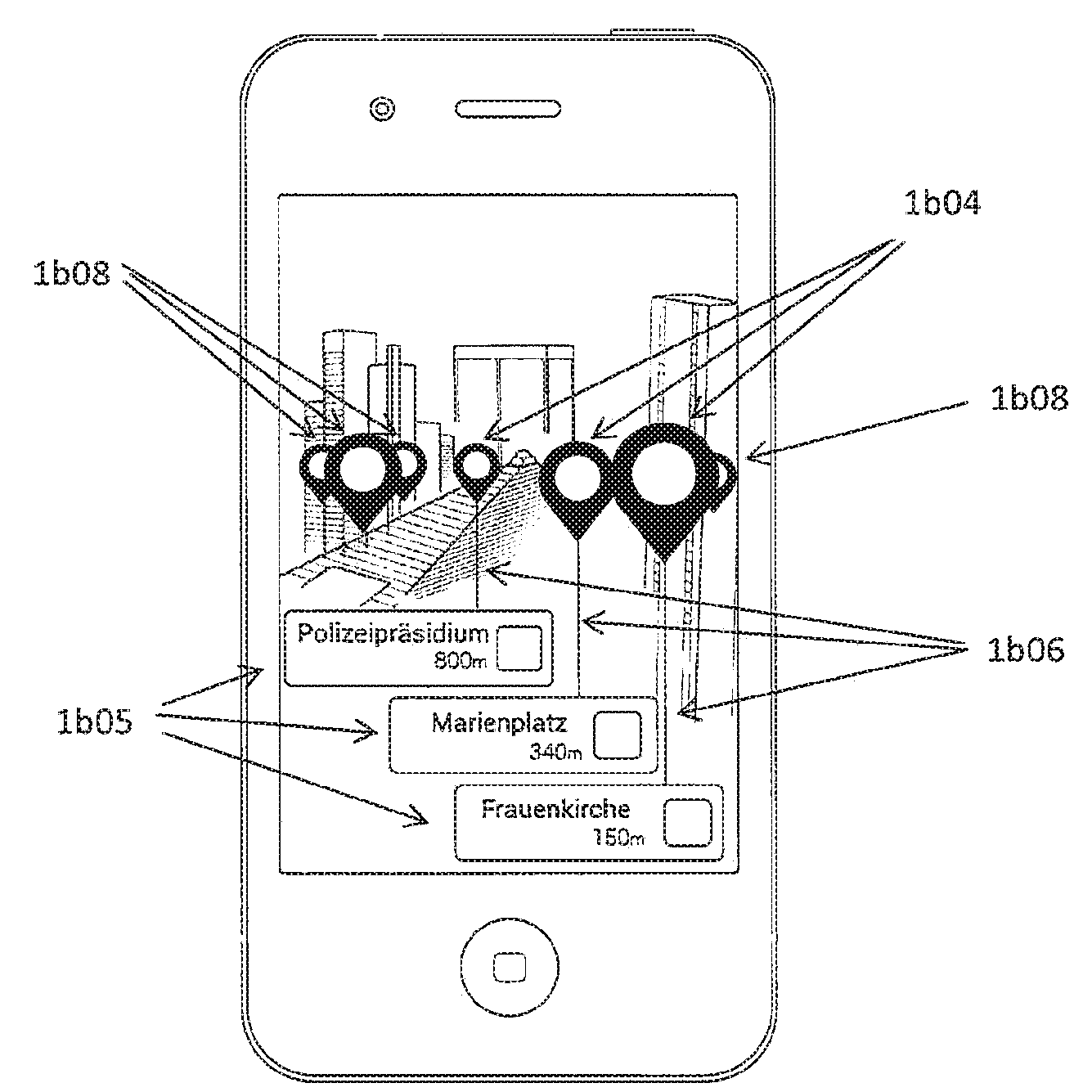

Enhancing the user’s view of the real world by superimposing computer-generated images of nearby points of interest on top of a live camera feed would make for a nice ARKit-driven Maps app. This could be accomplished by fusing high-resolution imagery from the camera with geolocation data gleaned from the GPS, compass and other onboard sensors.

Combining latitude, longitude and altitude readings with compass and orientation data could let an iPhone or iPad determine its position and heading relative to the surrounding environment, so the system could overlay interactive markers at their correct spots on the captured image.
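To make the idea concrete, here is a minimal Python sketch of how a POI’s GPS coordinates could be mapped to a spot on screen. It is not Apple’s method: it assumes a simple pinhole camera, treats the horizontal and vertical angles independently, ignores device roll, and uses a great-circle bearing plus the haversine distance. The names `GeoPoint` and `project_poi` and all of their parameters are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass
class GeoPoint:
    lat: float  # degrees
    lon: float  # degrees
    alt: float  # metres


def bearing_and_distance(a: GeoPoint, b: GeoPoint) -> Tuple[float, float]:
    """Initial great-circle bearing (degrees clockwise from north) and ground distance (metres) from a to b."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dlon = math.radians(b.lon - a.lon)
    y = math.sin(dlon) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    bearing = math.degrees(math.atan2(y, x)) % 360.0
    # Haversine formula for the distance along the Earth's surface
    h = math.sin((phi2 - phi1) / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlon / 2) ** 2
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))
    return bearing, distance


def project_poi(device: GeoPoint, heading_deg: float, pitch_deg: float, poi: GeoPoint,
                width: int, height: int, hfov_deg: float, vfov_deg: float) -> Optional[Tuple[float, float]]:
    """Screen position (x right, y down) of a POI, or None if it falls outside the camera's field of view."""
    bearing, distance = bearing_and_distance(device, poi)
    # Horizontal angle between the camera heading and the POI, wrapped to [-180, 180)
    yaw = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    # Vertical angle to the POI (from the altitude difference) relative to the camera's pitch
    elevation = math.degrees(math.atan2(poi.alt - device.alt, distance))
    pitch = elevation - pitch_deg
    if abs(yaw) >= hfov_deg / 2 or abs(pitch) >= vfov_deg / 2:
        return None
    # Pinhole-camera focal lengths in pixels, derived from the fields of view
    fx = (width / 2) / math.tan(math.radians(hfov_deg / 2))
    fy = (height / 2) / math.tan(math.radians(vfov_deg / 2))
    x = width / 2 + fx * math.tan(math.radians(yaw))
    y = height / 2 - fy * math.tan(math.radians(pitch))  # screen y grows downward
    return x, y
```

In practice GPS and compass readings are noisy, so markers placed purely this way would jitter unless refined with camera-based tracking.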

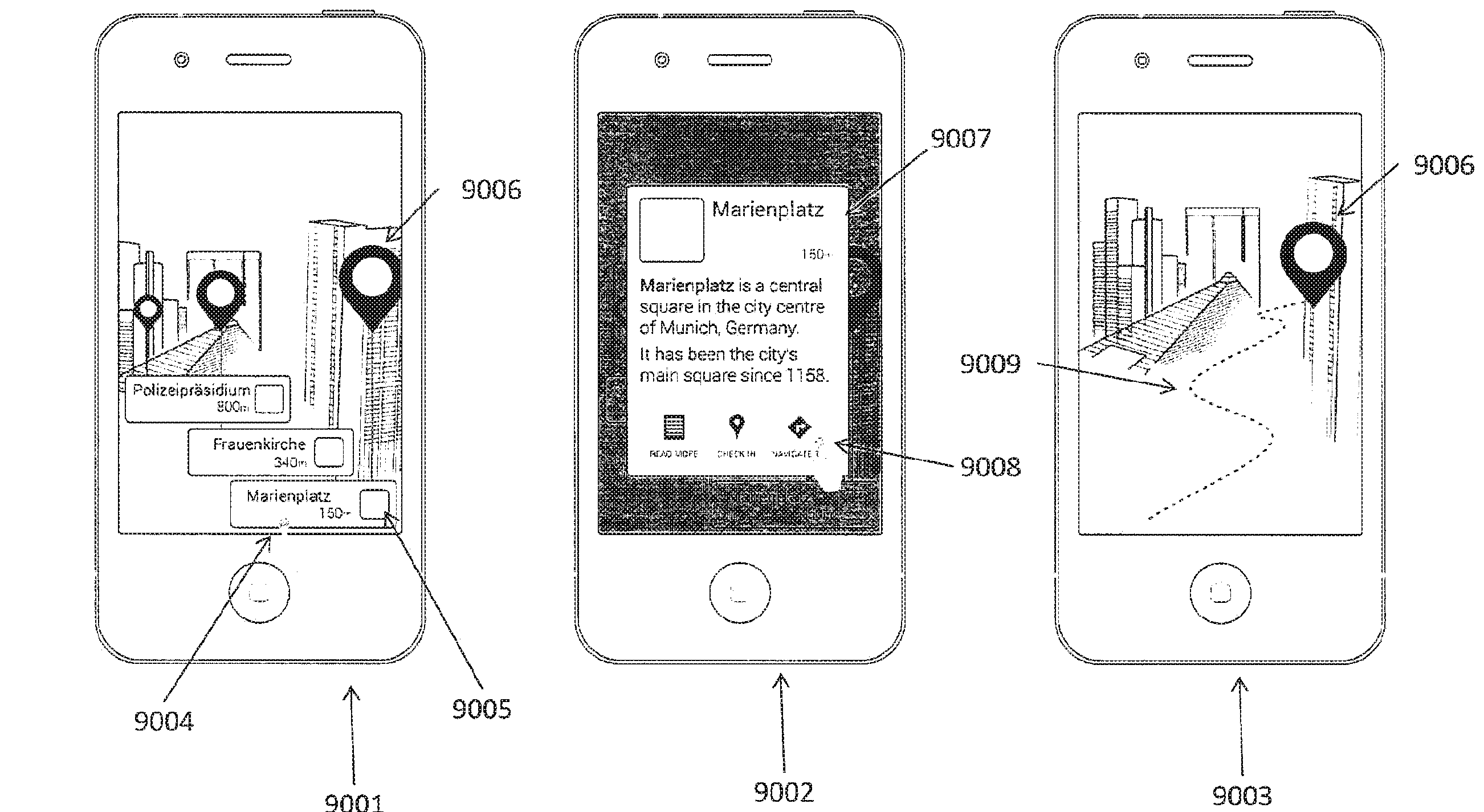

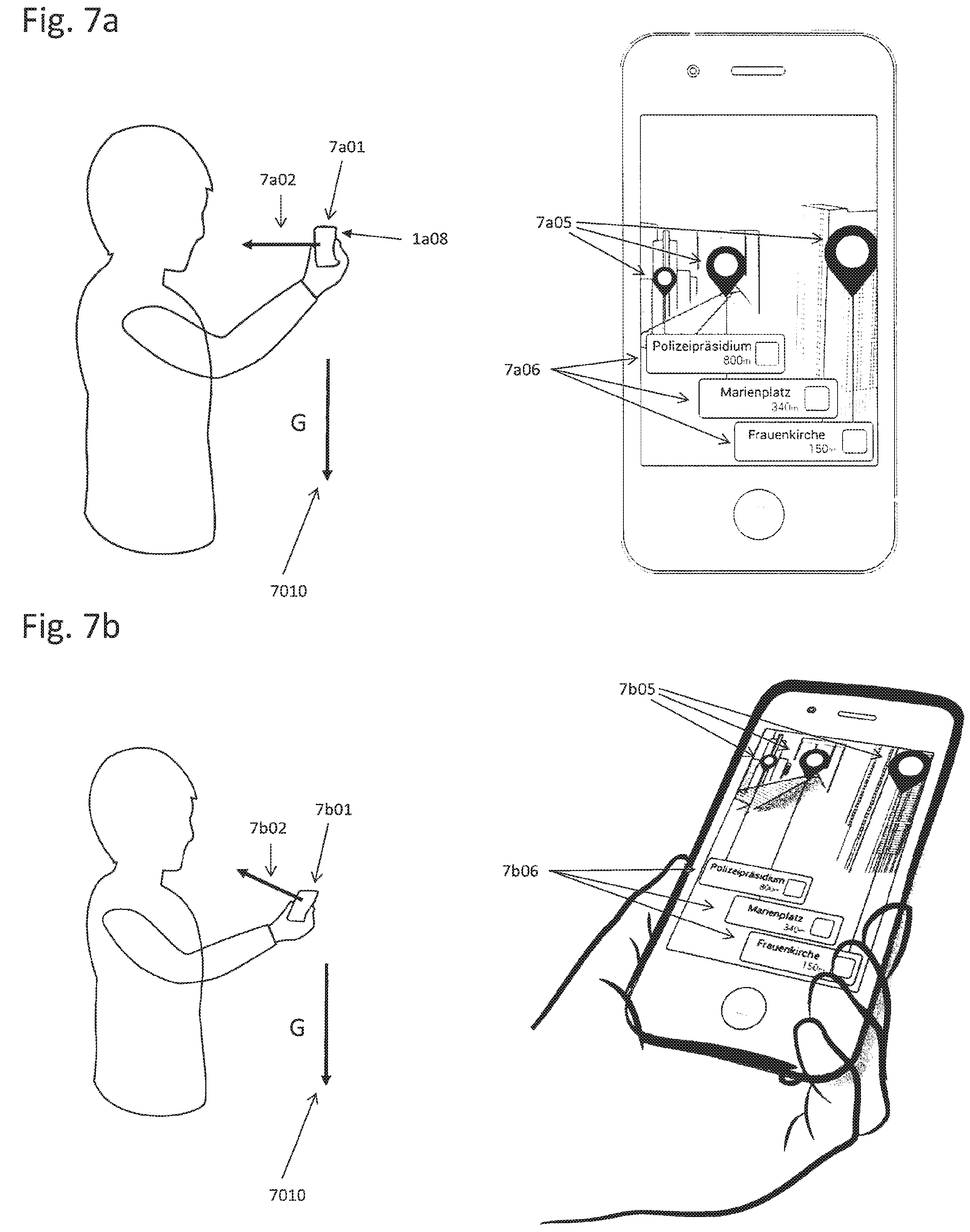

As we’ve seen with ARKit’s reliable and crazy accurate tracking, POI annotations could follow the device’s movements and stay anchored to their real-world objects. The system would even work with the device angled toward the ground, using a balloon interface to connect POIs to their real-world counterparts.

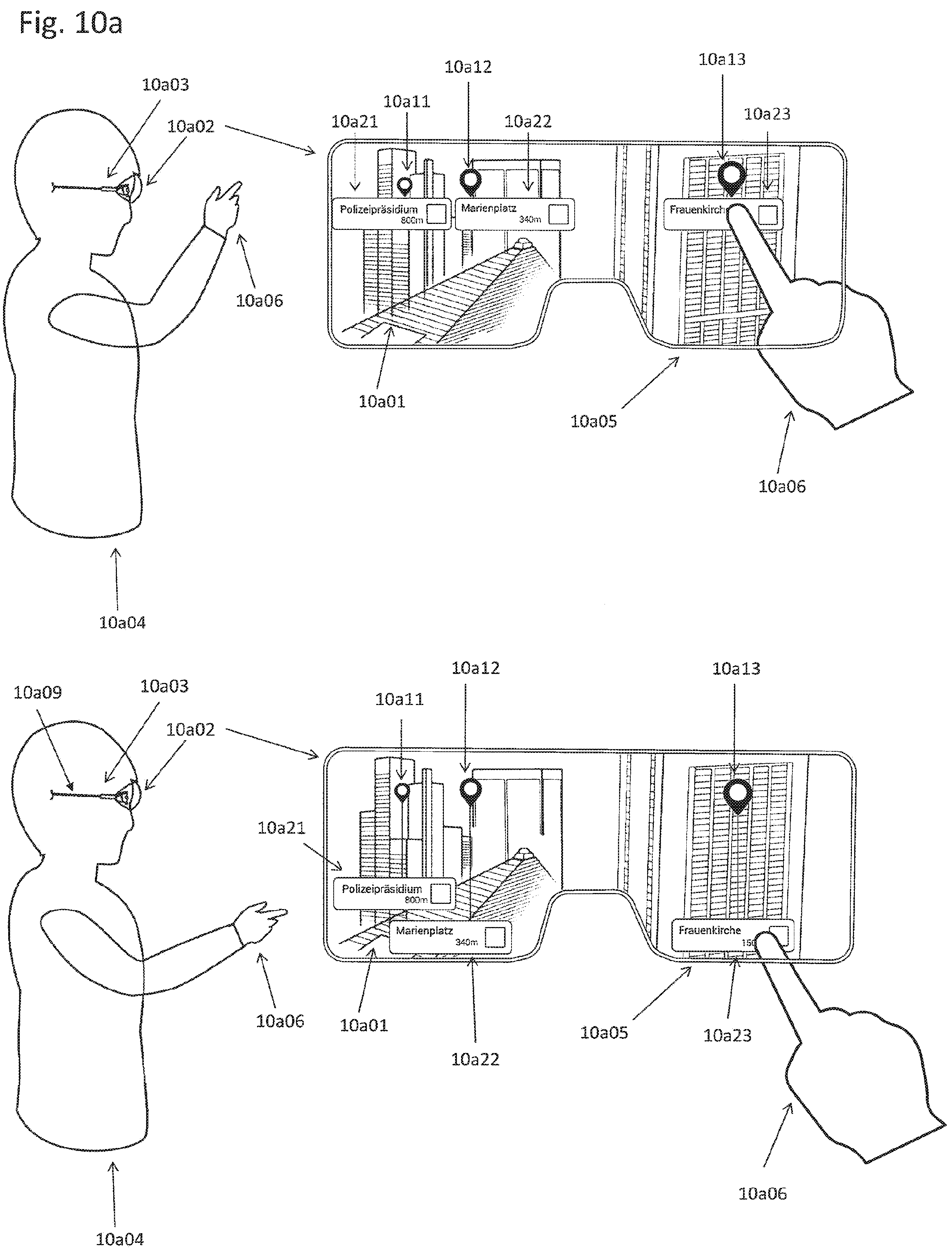

And should a user angle their device toward the ground beyond a certain threshold, the view would switch to a clear list view of the points of interest. The invention is particularly useful with a head-mounted display that contains both the camera and the screen.
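The patent excerpt doesn’t say how the tilt threshold is measured. One plausible approach, sketched here in Python, reads the gravity vector from the motion sensors, computes how far below the horizon the rear camera is pointing, and switches views with a bit of hysteresis so the display doesn’t flicker at the boundary. The axis convention (z out of the screen, rear camera looking along -z), the 60° and 50° thresholds, and the function names are all hypothetical.

```python
import math
from typing import Sequence

# Hypothetical thresholds; the patent excerpt gives no numbers.
LIST_ENTER_DEG = 60.0  # switch to the list once the camera points this far below the horizon
LIST_EXIT_DEG = 50.0   # switch back to AR only after tilting up past this angle


def camera_tilt_below_horizon(gravity: Sequence[float]) -> float:
    """Degrees the camera's optical axis points below the horizon (negative means above).

    Assumes `gravity` is the gravity vector in device coordinates with z pointing
    out of the screen, so the rear camera looks along (0, 0, -1).
    """
    gx, gy, gz = gravity
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    if norm == 0.0:
        raise ValueError("gravity vector must be non-zero")
    # Cosine of the angle between the optical axis (0, 0, -1) and "straight down"
    cos_to_down = -gz / norm
    # The angle below the horizon is the complement of the angle to straight down
    return math.degrees(math.asin(max(-1.0, min(1.0, cos_to_down))))


def next_view_mode(current: str, gravity: Sequence[float]) -> str:
    """Return "ar" or "list", using hysteresis so the view does not flicker near the threshold."""
    tilt = camera_tilt_below_horizon(gravity)
    if current == "ar" and tilt >= LIST_ENTER_DEG:
        return "list"
    if current == "list" and tilt <= LIST_EXIT_DEG:
        return "ar"
    return current
```

Using two thresholds instead of one means a user hovering right around the cut-off angle won’t see the AR view and the list swap back and forth.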

From Apple’s patent description:

> For example, the head-mounted display is a video-see-through head-mounted display. It is typically not possible for the user to touch the head-mounted screen in a manner like a touchscreen. However, the camera that captures an image of the real environment may also be used to detect image positions of the user’s finger in the image. The image positions of the user’s finger could be equivalent to touching points touched by the user’s finger on the touchscreen.

The patent application covers both approaches: smartphone-only augmented reality, and a hybrid solution that would render AR images on both your phone and a headset, with the iPhone acting as an interactive controller for the scene you see through the headset.

“The mobile device may perform an action related to the at least one point of interest if at least part of the computer-generated virtual object blended in on the semi-transparent screen is overlapped by a user’s finger or device held by the user,” notes Apple.
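Apple’s description leaves the detection itself open. Assuming a hand or object detector (not shown, and hypothetical) has already reduced the user’s finger or handheld device to a rectangle in the same screen coordinates as the blended-in POI graphics, the overlap condition in the quote above becomes a simple rectangle intersection test. This Python sketch picks the POI with the largest overlap; `Rect`, `PoiLabel` and `poi_under_pointer` are illustrative names, not Apple’s.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Rect:
    """Axis-aligned screen rectangle in pixels; (x, y) is the top-left corner."""
    x: float
    y: float
    w: float
    h: float

    def intersection_area(self, other: "Rect") -> float:
        dx = min(self.x + self.w, other.x + other.w) - max(self.x, other.x)
        dy = min(self.y + self.h, other.y + other.h) - max(self.y, other.y)
        # Non-positive extents mean the rectangles do not overlap
        return max(0.0, dx) * max(0.0, dy)


@dataclass(frozen=True)
class PoiLabel:
    name: str
    rect: Rect  # where the virtual object for this POI is drawn on screen


def poi_under_pointer(labels: Sequence[PoiLabel], pointer: Rect) -> Optional[PoiLabel]:
    """Return the POI label most overlapped by the finger (or held device) region, or None."""
    best: Optional[PoiLabel] = None
    best_area = 0.0
    for label in labels:
        area = label.rect.intersection_area(pointer)
        if area > best_area:
            best, best_area = label, area
    return best
```

On a semi-transparent screen the overlap would really be judged from the wearer’s eye position, so a fuller version would first map the camera’s view into the display’s coordinates.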

The application was first filed in April 2017 and credits engineers Anton Fedosov, Stefan Misslinger and Peter Meier as its inventors.